Accuracy is fundamental to the process of scientific measurement. We expect our gizmos and sensors to deliver data that are both robust and precise. If accurate data are available, reliable inferences can be made about whatever you happen to be measuring, and these inferences inform understanding and prediction of future events. But an absence of accuracy is disastrous: if we cannot trust the data, then the rug is pulled out from under the scientific method.

Having worked as a psychophysiologist for longer than I care to remember, I’m acutely aware of this particular house of cards. Even if your ECG or SCL sensor is working perfectly, there are always artefacts that can affect data in a profound way: this participant had a double espresso before they came to the lab, another is persistently scratching their nose. Psychophysiologists have to pay attention to data quality because the act of psychophysiological inference is far from straightforward*. In a laboratory where conditions are carefully controlled, these unwelcome interventions from the real world are handled by a double strategy: first of all, participants are asked to sit still and refrain from excessive caffeine consumption etc.; if that doesn’t work, we can remove the artefacts from the data record by employing various forms of post-hoc analysis.

Working with physiological measures under real-world conditions, where people can drink coffee and dance around the table if they wish, presents a significant challenge for all the reasons just mentioned. So, why would anyone even want to do it? For the applied researcher, it’s a risk worth taking in order to get a genuine snapshot of human behaviour away from the artificialities of the laboratory. For people like myself, who are interested in physiological computing and using these data as inputs to technological systems, the challenge of accurate data capture in the real world is a fundamental issue. People don’t use technology in a laboratory; they use it out there in offices and cars and cafes and trains. If we can’t get physiological computing systems to work ‘out there’, then one must question whether this form of technology is really feasible.

With these issues in mind, I’ve been watching the discussions and debates around the accuracy of wearable sensors with considerable interest. These wrist-worn devices incorporate a PPG (photoplethysmogram) sensor designed to measure heart rate. From the perspective of a psychophysiologist, heart rate is perhaps the easiest signal to measure in the real world with minimal intrusiveness or discomfort for the individual. In some ways, I see it as an early test case for physiological computing in the real world: if one can’t measure a gross signal like heart rate with a high degree of accuracy outside of the laboratory, then one wonders about our capability to accurately measure any signal at all. As a secondary issue for this article, but a topic of major importance in its own right, medical professionals are asking the same question about wrist-worn sensors; they wish to know whether they can use wearable data for monitoring existing patients or triaging new ones (see this Quora discussion as an example).

Looking at current research, there is good news and bad news. This study from January 2017 compared five devices across fifty adults, at rest and during exercise, against a medical ECG (electrodes attached to the chest). Unsurprisingly, the Polar H7, which is also a chest strap, delivered the highest correlation (.99, range = .987-.991) with the medical ECG. An Apple Watch was not as good (.91, .884-.929) but outperformed both the Fitbit Charge HR (.84, .791-.872) and Basis Peak (.83, .779-.865). The main culprit for reduced accuracy as we move from chest-worn sensors to wrist-worn ones was physical activity. The same pattern was observed in this paper from the Annals of Internal Medicine (April 2017) and this open source paper from the Journal of Personalised Medicine (May 2017). The good news is that wrist-worn wearables are fairly accurate if the person remains in a stationary position. Of course, they become less so once the person begins to move around, and given that many are marketed to support exercise, you can understand why some customers got angry enough about the accuracy of their data to bring a class action against Fitbit.

With respect to those physiological computing applications where the person remains in a seated position, it’s good to know that a high standard of data accuracy is achievable with minimal intrusion for the user. For applications where the person is ambulatory, such as AR and VR, there is obviously work to do. Or perhaps I am reading too much into data obtained from first generation technology, because there are other ways to access the same data, from remote webcam monitoring of heart rate to smart textiles.

The thing I find most interesting about the issue of data accuracy and wrist-worn wearables is the way in which manufacturers chose to present data at the interface. Like scientists, customers wish to use personal data to make accurate measurements, draw inferences and make predictions about future health. The manufacturers, perhaps anticipating customers’ desire for accuracy, chose to present heart rate data in the same way that it would appear to a patient in a hospital, as an incontrovertible source of feedback. Personal experience with the recently discontinued Microsoft Band (another wrist-worn wearable) revealed a data stream representing the accuracy of heart rate data. In other words, the device was capable of generating a score that perhaps (we never found out) reflected the quality of contact between the PPG sensor and the skin and, by extension, the degree of confidence in the resulting heart rate data.

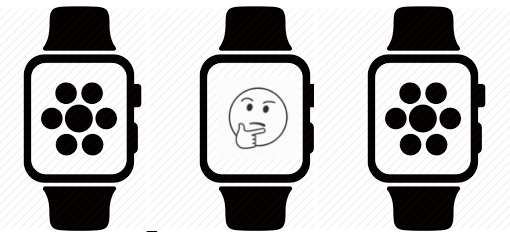

I think it’s fair to assume that similar devices have the same capacity, so from the perspective of interface design, why not make the user aware of signal quality? Why not have the display change colour if contact and data quality were compromised? It could be argued that accuracy feedback would reduce customer confidence or simply make the wearable device look bad. But a user armed with this level of feedback could experiment with placement of the device or learn to strap the device very tightly when running or in the gym. Legal challenges, like the class action case mentioned earlier, stem from customers’ overestimation of sensor accuracy, a misunderstanding arising from the absence of any feedback to the contrary.

Perhaps it is time for manufacturers working on the next generation of wearable devices to consider the concept of ‘intelligent wearables.’ Such sensors would be capable of self-diagnosing contact problems and of giving feedback when data accuracy is degraded for any reason. One feels this is less of a technical innovation than a presentational challenge for the marketing department, because we know that some existing sensors routinely monitor data quality. Intelligent wearables are an absolute necessity for the closed-loop logic of a physiological computing system, which needs to know when data cannot be trusted and any adaptive response must be placed ‘on hold.’
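To make the ‘on hold’ idea concrete, here is a minimal sketch in Python of how a closed-loop system might gate its adaptive response on signal quality. Everything in it is an assumption made for illustration: the `Sample` type, the 0 to 1 `quality` score, the 0.7 threshold, the 100 bpm cut-off and the response labels are all hypothetical, and no real device is known to expose its data in this form.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Sample:
    heart_rate_bpm: float
    quality: float  # hypothetical confidence score, 0.0 (no contact) to 1.0 (ideal)


QUALITY_THRESHOLD = 0.7  # assumed cut-off, chosen only for illustration


def adaptive_response(sample: Sample,
                      threshold: float = QUALITY_THRESHOLD) -> Optional[str]:
    """Return an adaptation decision, or None when the loop is on hold."""
    if sample.quality < threshold:
        # The data cannot be trusted, so the system does nothing
        # rather than adapting to what may be an artefact.
        return None
    # Hypothetical rule: respond only to an elevated heart rate.
    return "reduce_demand" if sample.heart_rate_bpm > 100 else "no_change"
```

The point of returning `None` rather than a default response is that the rest of the system can tell ‘nothing needs to change’ apart from ‘I don’t know’, which is exactly the distinction that is hidden from the user of a current wearable.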

Being able to detect and disregard ‘bad epochs’ of data is equally important for the customers of wearable sensors who have their own reasons for collecting personal data. Like any scientist, they want to make inferences and predictions on the basis of accurate data.
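As a rough illustration of what disregarding bad epochs could look like, the Python sketch below averages heart rate over only those epochs whose quality score clears a threshold. The epoch format (a heart rate paired with a 0 to 1 quality score), the 0.7 threshold and the example values are all invented for the purpose; they are not taken from any real device or dataset.

```python
from statistics import mean
from typing import List, Optional, Tuple

# Each epoch is (heart rate in bpm, hypothetical quality score from 0.0 to 1.0).
Epoch = Tuple[float, float]


def mean_heart_rate(epochs: List[Epoch],
                    min_quality: float = 0.7) -> Optional[float]:
    """Mean heart rate over trusted epochs, or None if none can be trusted."""
    good = [hr for hr, quality in epochs if quality >= min_quality]
    return mean(good) if good else None


# Made-up example: the third epoch has poor contact and an implausible reading.
epochs = [(62.0, 0.95), (64.0, 0.90), (141.0, 0.20), (66.0, 0.85)]
print(mean_heart_rate(epochs))  # 64.0, the bad epoch is ignored
```

Without the quality filter, the same four epochs would average 83.25 bpm, which is the kind of misleading figure a customer has no way of questioning if the device never reports its own confidence.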

* If you don’t believe me, please read this classic paper by Cacioppo et al.
