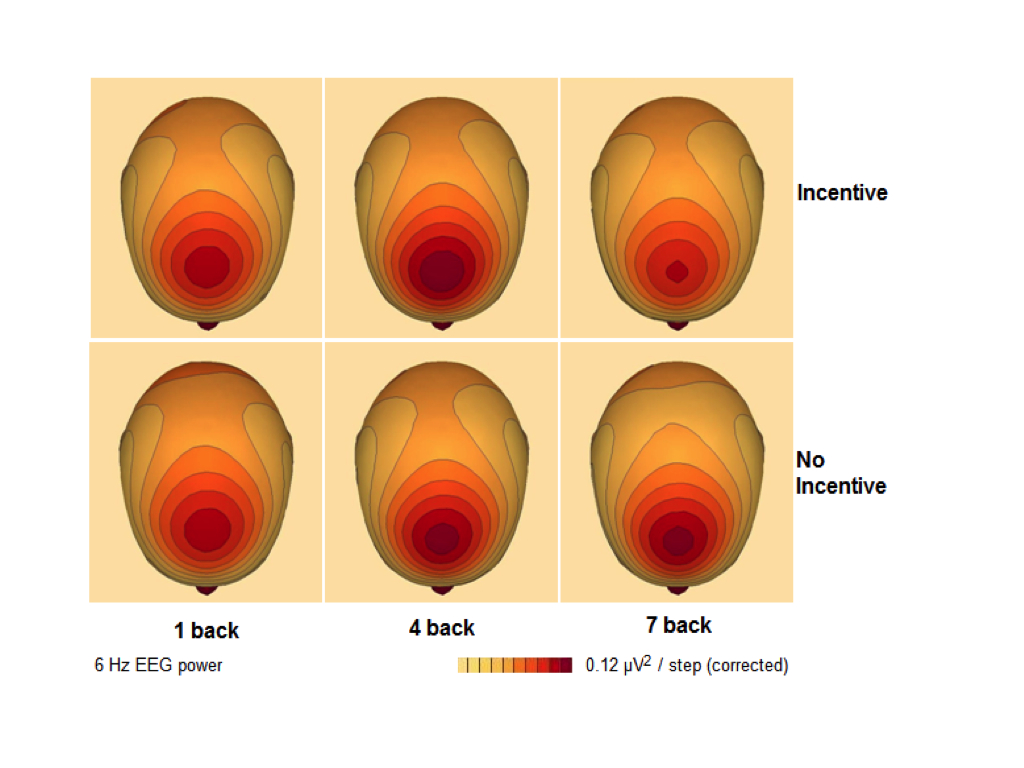

Back in 2003, Lawrence Hettinger and colleagues penned this paper on the topic of neuroadaptive interface technology. The concept describes a closed-loop system in which fluctuations in cognitive activity or emotional state inform the functional characteristics of an interface. The core idea sits comfortably with a host of closed-loop technologies in the domain of physiological computing.

One great insight from this 2003 paper was to describe how neuroadaptive interfaces could enhance communication between person and system. The authors argued that human-computer interaction exists in an asymmetrical form. The person can access a huge amount of information about the computer system (available RAM, number of active operations), but the system is fundamentally ‘blind’ to the intentions of the user or their level of mental workload, frustration or fatigue. Neuroadaptive interfaces would enable symmetrical forms of human-computer interaction, where technology can respond to implicit changes in the human nervous system and, most significantly, interpret those covert sources of data in order to inform responses at the interface.

Allowing humans to communicate implicitly with machines in this way could enormously increase the efficiency of human-computer interaction with respect to ‘bits per second’. The keyboard, mouse and touchscreen remain the dominant modes of input control by which we translate thoughts into action in the digital realm. We communicate with computers via volitional acts of explicit perceptual-motor control. The same asymmetrical, explicit model of HCI holds true for naturalistic modes of input control, such as speech and gestures. The concept of a symmetrical HCI based on implicit signals that are generated spontaneously and automatically by the user represents a significant shift from conventional modes of input control.

This recent paper published in PNAS by Thorsten Zander and colleagues provides a demonstration of a symmetrical, neuroadaptive interface in action.