Accuracy is fundamental to the process of scientific measurement: we expect our gizmos and sensors to deliver data that are both robust and precise. If accurate data are available, reliable inferences can be made about whatever you happen to be measuring, and these inferences inform understanding and prediction of future events. But the absence of accuracy is disastrous; if we cannot trust the data, then the rug is pulled out from under the scientific method.

Having worked as a psychophysiologist for longer than I care to remember, I’m acutely aware of this particular house of cards. Even if your ECG or SCL sensor is working perfectly, there are always artefacts that can affect data in a profound way: one participant had a double espresso before they came to the lab, another is persistently scratching their nose. Psychophysiologists have to pay attention to data quality because the act of psychophysiological inference is far from straightforward*. In a laboratory where conditions are carefully controlled, these unwelcome interventions from the real world are handled by a two-part strategy: first, participants are asked to sit still and refrain from excessive caffeine consumption and the like; if that doesn’t work, we can remove the artefacts from the data record using various forms of post-hoc analysis.

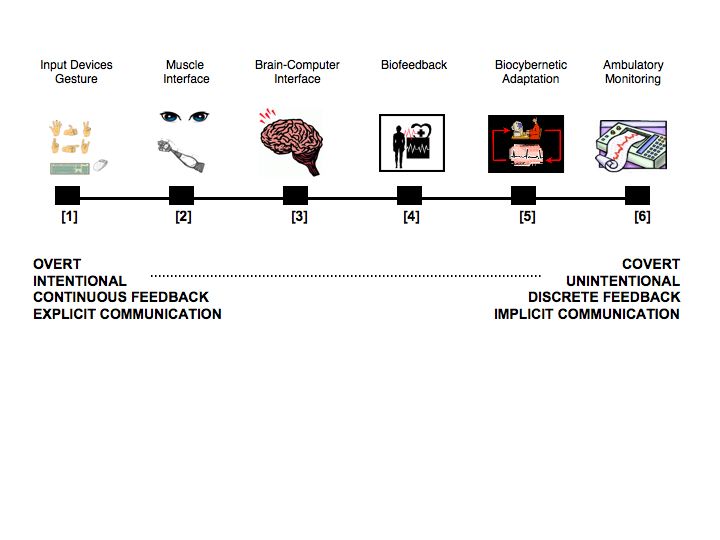

Working with physiological measures under real-world conditions, where people can drink coffee and dance around the table if they wish, presents a significant challenge for all the reasons just mentioned. So why would anyone even want to do it? For the applied researcher, it’s a risk worth taking in order to get a genuine snapshot of human behaviour away from the artificialities of the laboratory. For people like me, who are interested in physiological computing and in using these data as inputs to technological systems, the challenge of accurate data capture in the real world is a fundamental issue. People don’t use technology in a laboratory; they use it out there in offices and cars and cafes and trains. If we can’t get physiological computing systems to work ‘out there’, then one must question whether this form of technology is really feasible.