A quick post to alert people to the first forum for the Community for Passive BCI Research, which takes place from the 16th to the 18th of July at the Hanse Institute for Advanced Study in Delmenhorst, near Bremen, Germany. This event is being organised by Thorsten Zander from the Berlin Institute of Technology.

The main aim of the forum, in his own words, “is to connect researchers in this young field and to give them a platform to share their motivations and intentions. Therefore, the focus will not be primarily set on the presentation of new scientific results, but on the discussion of current and future directions and the possibilities to shape the community.”

Book Announcement – Advances in Physiological Computing

It was way back in 2011 during our CHI workshop that we first discussed the possibility of putting together an edited collection for Springer on the topic of physiological computing. It was clear to me at that time that many people associated physiological computing with implicit monitoring, as opposed to the active control that characterised BCI. When we had the opportunity to put together a collection, one idea was to extend the scope of physiological computing to include all technologies where signals from the brain and the body were used as a form of input. Some may interpret this relabelling of physiological computing as an all-inclusive category as a provocative move. We did not intend it as a conceptual ‘land-grab’ but rather as an attempt to be as inclusive as possible and to bring together what I still perceive to be a rather disparate and fractured research community. After all, we are all using psychophysiology in one form or another and share a common interest in sensor design, interaction mechanics and real-time measurement.

The resulting book is finally close to publication (tentative date: 4th April 2014) and you can follow this link to get the full details. We’re pleased to have a wide range of contributions on an array of technologies, from eye input to digital memories via mental workload monitoring, implicit interaction, robotics, biofeedback and cultural heritage. Thanks to all our contributors and the staff at Springer who helped us along the way.

Reflections on first International Conference on Physiological Computing Systems

Last week I attended the first international conference on physiological computing held in Lisbon. Before commenting on the conference, it should be noted that I was one of the program co-chairs, so I am not completely objective – but as this was something of a watershed event for research in this area, I didn’t want to let the conference pass without comment on the blog.

The conference lasted for two-and-a-half days and included four keynote speakers. It was a relatively small meeting with respect to the number of delegates – but that is to be expected from a fledgling conference in an area that is somewhat niche with respect to methodology but very broad in terms of potential applications.

What kind of Meaningful Interaction would you like to have? Pt 1

A couple of years ago we organised this CHI workshop on meaningful interaction in physiological computing. As much as I felt this was an important area for investigation, I also found the topic very hard to get a handle on. I recently revisited this problem while working on a book chapter co-authored with Kiel for our forthcoming Springer collection, ‘Advances in Physiological Computing’, due out next May.

On reflection, much of my difficulty revolved around the complexity of defining meaningful interaction in context. For systems like BCI or ocular control, where input control is the key function, the meaningfulness of the HCI is self-evident. If I want an avatar to move forward, I expect my BCI to translate that intention into analogous action at the interface. But biocybernetic systems, where spontaneous psychophysiology is monitored, analysed and classified, are a different story. The goal of such a system is to adapt in a timely and appropriate fashion, and evaluating the literal meaning of that kind of interaction is complex for a host of reasons.

The Epoc and Your Next Job Interview

Imagine you are waiting to be interviewed for a job that you really want. You’d probably be nervous, fingers drumming the table, eyes restlessly scanning the room. The door opens and a man appears. He is wearing a lab coat and holding an EEG headset in both hands. He places the set on your head and says “Your interview starts now.”

This Philip K Dick scenario became reality for intern applicants at the offices of TBWA, an advertising firm based in Istanbul. And thankfully a camera was present to capture this WTF moment for each candidate so that the video could be uploaded to Vimeo.

The rationale for the exercise is quite clear. The company want to appoint people who are passionate about advertising, so, working with a consultancy, they devised a test where candidates watch a series of acclaimed ads and the Epoc is used to measure their levels of ‘passion’, ‘love’ and ‘excitement’ in a scientific and numeric way. Those who exhibit the greatest passion for adverts get the job (this is the narrative of the movie; in reality one suspects/hopes they were interviewed as well).

I’ve seen at least one other blog post that expressed some reservations about the process.

Let’s take a deep breath because I have a whole shopping list of issues with this exercise.

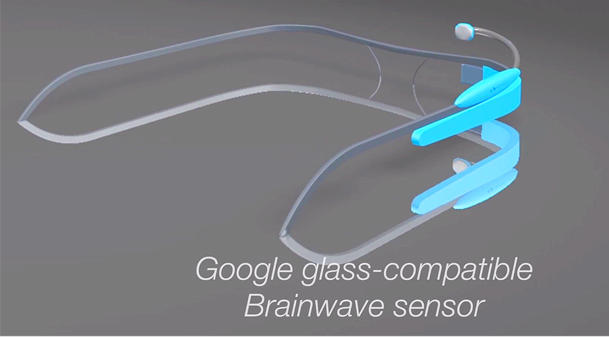

Redundancy, Enhancement and the Purpose of Physiological Computing

There have been a lot of tweets and blog posts devoted to an article written recently by Don Norman for the MIT Technology Review on wearable computing. The original article is here, but in summary, Norman points to an underlying paradox surrounding Google Glass etc. In the first instance, these technological artifacts are designed to enhance human abilities (allowing us to email on the move, navigate etc.). However, because of inherent limitations on the human information processing system, they have significant potential to degrade aspects of human performance. Think about browsing Amazon on your glasses whilst crossing a busy street and you get the idea.

The paragraph in Norman’s article that caught my attention and is most relevant to this blog is this one.

“Eventually we will be able to eavesdrop on both our own internal states and those of others. Tiny sensors and clever software will infer their emotional and mental states and our own. Worse, the inferences will often be wrong: a person’s pulse rate just went up, or their skin conductance just changed; there are many factors that could cause such things to happen, but technologists are apt to focus upon a simple, single interpretation.”

Comfort and Comparative Performance of the Emotiv EPOC

I’ve written a couple of posts about the Emotiv EPOC over the years of doing the blog, from user interface issues in this post to the uncertainties surrounding the device for customers and researchers here.

The good news is that research is starting to emerge where the EPOC has been systematically compared to other devices, and perhaps some uncertainties can be resolved. The first study, by Ekandem et al., was published in the journal Ergonomics in 2012. You can read an abstract here (apologies to those without a university account who can’t get behind the paywall). These authors performed an ergonomic evaluation of both the EPOC and the NeuroSky MindWave. Data were obtained from 11 participants, each of whom wore either a MindWave or an EPOC for 15 min on different days. They concluded that there was no clear ‘winner’ from the comparison. The EPOC has 14 sites compared to the single site used by the MindWave, hence it took longer to set up and required more cleaning afterwards (and more consumables). No big surprises there. It follows that signal acquisition was easier with the MindWave, but the authors report that once the EPOC was connected and calibrated, its signal quality was more consistent than that of the MindWave, despite sensor placement for the former being obstructed by hair.

Data Trading, Body Snooping & Insight from Physiological Data

If there are two truisms in the area of physiological computing, they are: (1) people will always produce physiological data and (2) these data are continuously available. The passive nature of physiological monitoring and the relatively high fidelity of the data that can be obtained are one reason why we’re seeing physiology and psychophysiology as candidates for Big Data collection and analysis (see my last post on the same theme). It is easy to see the appeal of physiological data in this context. To borrow a quote from Jaron Lanier’s new book, “information is people in disguise”, and we all have the possibility of gaining insight from the data we generate as we move through the world.

If I collect physiological data about myself, as Kiel did during the bodyblogger project, it is clear that I own that data. After all, the original ECG was generated by me and I went to the trouble of populating a database for personal use, so I don’t just own the data, I own a particular representation of the data. But if I granted a large company or government access to my data stream, who would own the data?

Big Physiological Data

I attended a short conference event organised by the CEEDs project earlier this month entitled “Making Sense of Big Data.” CEEDs is an EU-funded project under the Future and Emerging Technology (FET) Initiative. The project is concerned with the development of novel technologies to support human experience. The event took place at the Google Campus in London and included a range of speakers talking about the use of data to capture human experience and behaviour. You can find a link about the event here that contains full details and films of all the talks, including a panel discussion. My own talk was a general introduction to physiological computing and a statement of our latest project work.

It was a thought-provoking day because it was an opportunity to view the area of physiological computing from a different perspective. The main theme was that we are entering the age of ‘big data’, in the sense that passive monitoring of people using mobile technology grants access to a wide array of data concerning human behaviour. Of course this is hugely relevant to physiological monitoring systems, which tend towards high-resolution data capture and may represent the richest vein of big data to index the human experience.

First International Conference on Physiological Computing

If there is a problem for academics working in the area of physiological computing, it is finding the right place to publish. By the right place, I mean a forum that is receptive to multidisciplinary research and where you feel confident that you can reach the right audience. Having done a lot of reviewing of physiological computing papers, I see work that is often strong on measures/methodology but weak on applications; alternatively, papers focus on interaction mechanics but are sometimes poor on the measurement side. The main problem lies with the expertise of the reviewer or reviewers, who often tend to be either psychologists or computer scientists, so it can be difficult for authors to strike the right balance.

For this reason, I’m writing to make people aware of The First International Conference on Physiological Computing to be held in Lisbon next January. The deadline for papers is 30th July 2013. A selected number of papers will be published by Springer-Verlag as part of their series of Lecture Notes in Computer Science. The journal Multimedia Tools & Applications (also published by Springer) will also select papers presented at the conference to form a special issue. There is also a special issue of the journal Transactions on Computer-Human Interaction (TOCHI) on physiological computing that is currently open for submissions; the CFP is here and the deadline is 20th December 2013.

I should also plug a new journal from Inderscience called the International Journal of Cognitive Performance Support, which has just published its first edition and would welcome contributions on brain-computer interfaces and biofeedback mechanics.